Large language models (LLMs) aren’t actually giant computer brains. Instead, they are effectively massive vector spaces in which the probabilities of tokens occurring in a specific order are encoded. Billions of parameters, times N bits per parameter, equals N-billion bits of storage required for a full model. Since increasing the number of parameters makes the models appear smarter, most effort on reducing the storage they require has focused on reducing the size of the parameters themselves.
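To put that multiplication into numbers, here is a quick back-of-the-envelope sketch. The 7-billion-parameter figure is purely an illustrative assumption, and the function ignores any metadata or per-block scaling factors that a real model file would carry.

```python
def model_size_gib(num_params: float, bits_per_param: int) -> float:
    """Raw weight storage in GiB: parameters times bits, converted to bytes."""
    return num_params * bits_per_param / 8 / 2**30


# Hypothetical 7-billion-parameter model at a few common precisions.
for bits in (32, 16, 8, 4):
    print(f"{bits:>2}-bit parameters: {model_size_gib(7e9, bits):6.2f} GiB")
```

Halving the bits per parameter halves the storage, which is why so much attention goes to the width of each individual value.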

Vector quantization (VQ) is a method that can compress the vectors calculated during inference so that they take up less space without significant loss of data. Google’s recently published pre-print paper on TurboQuant covers an LLM-oriented VQ algorithm that’s claimed to provide up to a 6x compression level with no negative impact on inference times.

The tokens aren’t directly encoded in the vector space, but their associated key and value vectors are. Together with the fact that each inference pass produces only a single token, this creates the need for a key-value (KV) cache, the size of which scales with the size of the model. Compressing the KV cache using VQ thus reduces its size and correspondingly speeds up look-ups due to the smaller footprint in memory. One catch here is that, due to the nature of quantization, some accuracy will be lost. The trick is thus to apply VQ in such a way that this loss is not noticeable.

Other aspects that had to be taken into account by the TurboQuant algorithm were fast computation to keep up with real-time requirements, along with compatibility with so-called ‘AI accelerator’ hardware.

Key-Value Cache

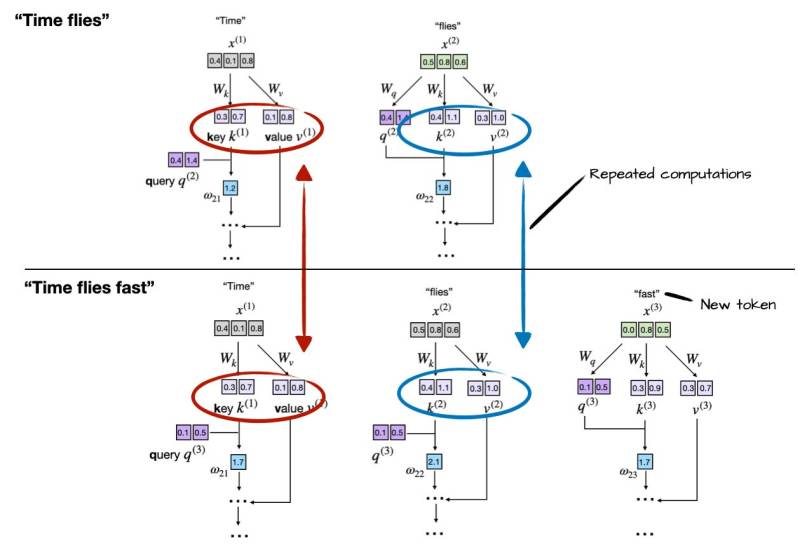

A basic way to look at the KV cache in LLMs is that it caches the results of previous inference cycles. An in-depth explanation can for example be found in this article by Sebastian Raschka. In the case of generating a phrase of three words starting with the word ‘Time’, we can see the following repeated computations:

Considering that inference is rather expensive computation-wise, you really want to cache these calculated values. This provides a massive boost in performance and much lower CPU load, but because there’s no such thing as a free lunch the catch here is a rapidly increasing memory usage.
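The trade-off can be made concrete with a toy single-head attention loop. This is only a sketch: the `kv_project` function is a made-up stand-in for the real key and value weight matrices, and the three two-element embeddings are arbitrary. It generates three tokens with and without a cache and counts how often the key/value projection has to run.

```python
import math


def kv_project(x):
    """Toy stand-in for the key and value projections of one token embedding."""
    kv_project.calls += 1
    key = [2.0 * v for v in x]      # pretend this is W_k @ x
    value = [v + 1.0 for v in x]    # pretend this is W_v @ x
    return key, value


kv_project.calls = 0


def attend(query, keys, values):
    """Softmax-weighted average of the values, scored by query-key dot products."""
    scores = [sum(q * k for q, k in zip(query, key)) for key in keys]
    top = max(scores)
    weights = [math.exp(s - top) for s in scores]
    total = sum(weights)
    dim = len(values[0])
    return [sum(w * v[i] for w, v in zip(weights, values)) / total for i in range(dim)]


def generate(embeddings, use_cache):
    """Process tokens one at a time; return the outputs and the projection count."""
    kv_project.calls = 0
    cached_keys, cached_values = [], []
    outputs = []
    for step, embedding in enumerate(embeddings):
        if use_cache:
            # Only the newest token is projected; older results are reused.
            key, value = kv_project(embedding)
            cached_keys.append(key)
            cached_values.append(value)
            keys, values = cached_keys, cached_values
        else:
            # Every token seen so far is projected again on each step.
            pairs = [kv_project(e) for e in embeddings[: step + 1]]
            keys = [p[0] for p in pairs]
            values = [p[1] for p in pairs]
        outputs.append(attend(embedding, keys, values))
    return outputs, kv_project.calls


tokens = [[1.0, 0.0], [0.5, 0.5], [0.0, 1.0]]
_, without_cache = generate(tokens, use_cache=False)
_, with_cache = generate(tokens, use_cache=True)
print("projections without cache:", without_cache)
print("projections with cache:   ", with_cache)
```

For three tokens that is six projections versus three, and the gap grows quadratically with the length of the sequence. The price is the pair of lists that keep growing with every generated token.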

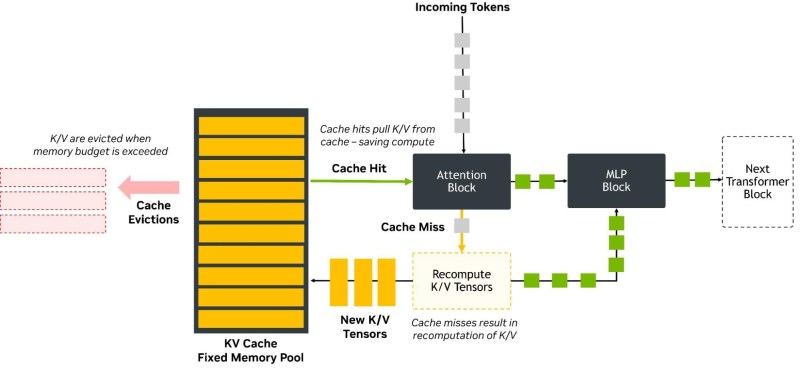

Correspondingly, we now have a big in-memory cache to manage, along with memory management routines to make sure that the KV cache doesn’t exceed its allocated memory pool:

As covered in a December 2025 NVIDIA Developer article, KV cache optimization has been a topic for a while, with the article in question covering NVFP4. This is a VQ approach that reduces the precision of the KV cache from 16-bit floating point to 4-bit (FP4). Meanwhile production systems already employ 8-bit quantization, also using a floating point format (FP8).

An additional cost here is that FP4 has to be dequantized back to FP8, which would seem to be an implementation detail in the current version. Compared to FP8 quantization, FP4 reduces latency by up to 3 times and halves the required memory, while accuracy is negatively impacted by ‘less than’ 1% compared to FP8 due to quantization error.
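How much memory is at stake can be estimated with the usual rule of thumb that every layer stores one key and one value vector per attention head for each token in the context. The model dimensions below (32 layers, 8 KV heads, a head dimension of 128 and a 32,768-token context) are hypothetical and not taken from any of the cited articles, and the per-block scale factors that FP4 formats add are left out.

```python
def kv_cache_gib(layers, kv_heads, head_dim, context_tokens, bits_per_value):
    """Approximate KV cache size in GiB for a single sequence."""
    values_stored = 2 * layers * kv_heads * head_dim * context_tokens  # keys + values
    return values_stored * bits_per_value / 8 / 2**30


# Hypothetical model dimensions, for illustration only.
for name, bits in (("FP16", 16), ("FP8", 8), ("FP4", 4)):
    size = kv_cache_gib(32, 8, 128, 32768, bits)
    print(f"{name:>4}: {size:.1f} GiB")
```

With these assumed dimensions each halving of the bit width halves the cache, which matches the memory saving quoted for FP4 over FP8.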

Accuracy here is important as it factors into the next auto-complete step when the LLM’s probability vector space is once again rummaged through for the next statistically most likely follow-up token. KV cache VQ compression is thus always a trade-off between memory use and accuracy. In short, the same issues apply as with all implementations of quantization-based compression, including the tragic absence of any free lunch.

Turbo Quantization

So what magic did Google’s intrepid engineers pull off to improve on NVIDIA’s NVFP4 approach? The key is in how the quantization is performed, as it isn’t simply a matter of truncating or throwing away data, or rounding to the nearest available value. Instead a series of steps is applied that seeks to minimize the quantization error, which in the case of TurboQuant is (confusingly) an algorithm called PolarQuant followed by the QJL (quantized Johnson-Lindenstrauss) algorithm.

Annoyingly for the non-mathematically gifted/educated among us, Google didn’t simply provide a straightforward visualization like that for NVFP4 that’s understandable even for us software developers and other casuals. For NVIDIA’s format we can see that it takes the form of a single sign bit, two exponent bits and one mantissa bit (E2M1), as well as a shared FP8 scale per block of 16 values.
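For the software developers among us, the E2M1 layout is perhaps easiest to grasp in code. A sign bit plus three bits of exponent and mantissa leave only eight representable magnitudes, which the shared scale stretches to fit each block of 16 values. The following is a rough sketch rather than NVIDIA’s implementation: the scale is kept as an ordinary Python float instead of being encoded as FP8, and the scale is simply chosen so that the largest value in the block lands on the largest magnitude.

```python
# The eight magnitudes an E2M1 value can take; the list index is the 3-bit code.
E2M1_MAGNITUDES = (0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0)
BLOCK_SIZE = 16


def quantize_block(block):
    """Return (scale, codes) with one 4-bit code per value: sign bit + 3-bit index."""
    max_abs = max(abs(v) for v in block)
    # Assumption: map the largest value in the block onto the top magnitude.
    scale = max_abs / E2M1_MAGNITUDES[-1] if max_abs > 0 else 1.0
    codes = []
    for v in block:
        target = abs(v) / scale
        index = min(range(8), key=lambda i: abs(E2M1_MAGNITUDES[i] - target))
        sign = 1 if v < 0 else 0
        codes.append((sign << 3) | index)
    return scale, codes


def dequantize_block(scale, codes):
    """Turn the 4-bit codes back into approximate floating point values."""
    return [
        (-1.0 if code & 0b1000 else 1.0) * E2M1_MAGNITUDES[code & 0b0111] * scale
        for code in codes
    ]


block = [(i - 8) / 4 for i in range(BLOCK_SIZE)]  # -2.0 up to 1.75
scale, codes = quantize_block(block)
restored = dequantize_block(scale, codes)
for original, approx in zip(block, restored):
    print(f"{original:6.2f} -> {approx:6.3f}")
```

The uneven spacing of the magnitudes means that small values are stored fairly precisely while large ones are coarse, and it is the per-block scale that keeps this workable across values of very different sizes.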

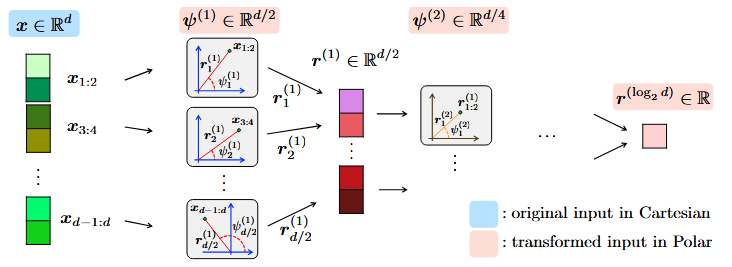

One step where TurboQuant appears to differ is in the PolarQuant algorithm, which applies a polar coordinates transformation to the vectors, following which a typical normalization can apparently be skipped.

This polar transformation is preceded by the application of a random projection matrix as a type of preconditioning that affects how the resulting values are distributed, with proof and the full algorithm provided in the PolarQuant arXiv paper for those who desire more detail.
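To get a feel for what ‘random preconditioning followed by a polar transformation’ means, the general idea can be sketched in a few lines. To be clear, this is not the PolarQuant algorithm from the paper. It is a simplified illustration that assumes a single level of pairing coordinates into (radius, angle), a uniform grid for the angles, and radii kept at full precision. All function names are made up for this example.

```python
import math
import random


def random_rotation(dim, rng):
    """Random orthogonal matrix, built with Gram-Schmidt on Gaussian rows."""
    rows = []
    while len(rows) < dim:
        v = [rng.gauss(0.0, 1.0) for _ in range(dim)]
        for r in rows:
            overlap = sum(a * b for a, b in zip(v, r))
            v = [a - overlap * b for a, b in zip(v, r)]
        norm = math.sqrt(sum(a * a for a in v))
        if norm > 1e-9:
            rows.append([a / norm for a in v])
    return rows


def matvec(matrix, x):
    return [sum(a * b for a, b in zip(row, x)) for row in matrix]


def transpose(matrix):
    return [list(column) for column in zip(*matrix)]


def to_polar_codes(x, angle_bits):
    """Pair up coordinates; keep each radius and quantize each angle."""
    levels = 1 << angle_bits
    pairs = []
    for i in range(0, len(x), 2):
        radius = math.hypot(x[i], x[i + 1])
        angle = math.atan2(x[i + 1], x[i]) % (2 * math.pi)
        code = int(angle / (2 * math.pi) * levels) % levels
        pairs.append((radius, code))
    return pairs


def from_polar_codes(pairs, angle_bits):
    """Rebuild the coordinates using the centre of each angle bin."""
    levels = 1 << angle_bits
    x = []
    for radius, code in pairs:
        angle = (code + 0.5) * 2 * math.pi / levels
        x.extend([radius * math.cos(angle), radius * math.sin(angle)])
    return x


rng = random.Random(0)
dim, angle_bits = 8, 4
rotation = random_rotation(dim, rng)
vector = [rng.gauss(0.0, 1.0) for _ in range(dim)]

codes = to_polar_codes(matvec(rotation, vector), angle_bits)
restored = matvec(transpose(rotation), from_polar_codes(codes, angle_bits))

error = math.sqrt(sum((a - b) ** 2 for a, b in zip(vector, restored)))
length = math.sqrt(sum(a * a for a in vector))
print(f"relative error with {angle_bits}-bit angles: {error / length:.3f}")
```

Because an angle always falls in a fixed range regardless of how large the vector is, it can be placed on a fixed grid without first rescaling the data, which gives some intuition for why a normalization step could be dropped.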

Of note is that PolarQuant employs the Johnson-Lindenstrauss lemma, which Google researchers used as the basis for a JL-based transform called QJL. From reading the blog post it’s not immediately clear whether QJL is directly integrated into PolarQuant or an additional step, due to the muddled messaging on Google’s end. From the benchmarking results it does appear that QJL is an additional step.
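The underlying idea of a quantized JL transform can be illustrated as follows: multiply the key by a random Gaussian matrix, keep only the sign of each result plus the norm of the key, and estimate inner products with an unquantized query from those sign bits. This sketch is written in the spirit of QJL and is not Google’s implementation. The dimensions, names and the choice of 2000 sign bits are assumptions, and far more bits are used than a real cache would store, purely so that the estimate lands visibly close to the exact value.

```python
import math
import random


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def make_projection(num_rows, dim, rng):
    """Random Gaussian matrix, as used in Johnson-Lindenstrauss style projections."""
    return [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(num_rows)]


def quantize_key(projection, key):
    """Keep one sign bit per projected coordinate, plus the key's norm."""
    signs = [1 if dot(row, key) >= 0 else -1 for row in projection]
    return signs, math.sqrt(dot(key, key))


def estimate_inner_product(projection, query, signs, key_norm):
    """Estimate <query, key> from the sign bits; the query stays unquantized."""
    total = sum(dot(row, query) * s for row, s in zip(projection, signs))
    # sqrt(pi / 2) undoes the shrinkage caused by keeping only the signs.
    return math.sqrt(math.pi / 2) * key_norm * total / len(projection)


rng = random.Random(42)
dim, num_rows = 16, 2000
projection = make_projection(num_rows, dim, rng)
key = [rng.gauss(0.0, 1.0) for _ in range(dim)]
query = [k + 0.3 * rng.gauss(0.0, 1.0) for k in key]

signs, key_norm = quantize_key(projection, key)
print("exact inner product:    ", dot(query, key))
print("estimate from sign bits:", estimate_inner_product(projection, query, signs, key_norm))
```

Since attention scores are inner products between queries and keys, an estimator like this lets the keys be stored as single bits while the scores can still be approximated.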

What we know is that the final format that TurboQuant ends up with is a three-bit value, which would logically be 1 bit smaller than NVFP4, or an approximately 25% smaller KV cache for the same amount of data.
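The arithmetic behind these ratios is simple enough to check by hand or in a few lines of code. The sketch below only compares raw bit widths, plus the overhead of one 8-bit scale per block of 16 values for NVFP4. Whatever overhead TurboQuant itself adds per value is not accounted for, as that is not something this comparison can assume.

```python
def compression_ratio(baseline_bits, quantized_bits):
    """How many times smaller the quantized data is than the baseline."""
    return baseline_bits / quantized_bits


def size_reduction(baseline_bits, quantized_bits):
    """Fraction of the baseline size that is saved."""
    return 1 - quantized_bits / baseline_bits


def effective_bits(value_bits, scale_bits, block_size):
    """Bits per value once a shared per-block scale is spread over the block."""
    return value_bits + scale_bits / block_size


print(f"3-bit vs 4-bit:  {size_reduction(4, 3):.0%} smaller")
print(f"3-bit vs 16-bit: {compression_ratio(16, 3):.2f}x")
print(f"4-bit vs 32-bit: {compression_ratio(32, 4):.0f}x")
print(f"NVFP4 with block scale: {effective_bits(4, 8, 16)} bits per value")
```

Note that three bits against a 16-bit baseline works out to roughly 5.3x on bit width alone, so any larger figure depends on what the baseline is taken to be.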

Judging On Merits

Comparison and benchmark data in the Google blog post and associated papers do not provide direct comparisons with NVFP4, and the few numbers that are thrown out are rather inconsistent, or unspecified. Take the claim of ‘at least 6x smaller memory size’, for example. The blog text does not clearly specify what this is relative to, while it then tosses out a 4-bit TurboQuant number of 8x performance increase compared to FP32.

Although with some more digging and poking of the available data it might be possible to glean some actual performance information from the provided files, it’s rather vexing how vague Google’s messaging is kept. Not to mention the lack of direct benchmarking against what would be the biggest competitors in the space.

It is definitely true that VQ is a thing for LLM KV cache compression, as we have seen, and NVIDIA ‘accelerator cards’ provide hardware acceleration for this feature, so this is the reality that TurboQuant would have to compete with. Based on the few clear facts that we do have it doesn’t appear that it’s quite the revolution that the hype machine has made it out to be, with it likely being just a bump over NVFP4 that NVIDIA is likely to trump again with its next quantized format.

It will of course be most interesting to see how this will play out once TurboQuant makes its way out of the laboratory into the wider world and we start seeing independent benchmarking performed.
